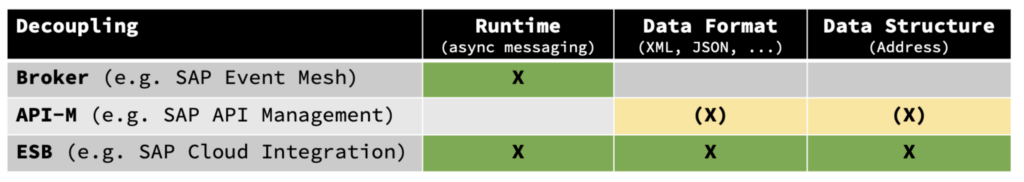

We often hear “we need a message broker to be able to decouple applications”. Moving away from point-to-point integration is a good way to remove dependencies between systems. But what does decoupling (or loose coupling) actually mean, and which dimensions does it have?

Here's an overview (for more, check out this video from AWS re:Invent by Gregor Hohpe):

Among all the different types of dependencies, we look at the most important ones:

- Runtime dependencies can be solved with asynchronous messaging: the sender (system) submits a message to the middleware, independent of whether the receiver is available at that moment.

- Data Format dependencies exist where both systems have to speak the same technical language (XML/JSON/CSV/…). The decoupling takes place via converters. In API Management (API-M) this can be solved with policies.

- Data Structure dependencies look at structural, technical and semantic differences of the fields and elements, which can include:

  - the structure and arrangement of field names in a message (e.g. ZipCode vs. ZIP),

  - the data type behind a field (e.g. string/int, null/empty),

  - the semantic content of a field, e.g. the country code (DE vs. GER).

The decoupling is done with mappings like XSLT (for XML), with scripting/programming, or via graphical mapping tools. In API-M it can also be done via policies, but we would not recommend that approach.
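To make the two dependency types concrete, here is a minimal Python sketch of what a mediation step does. It converts the data format (XML to JSON) and maps the data structure (ZipCode to ZIP, empty to null, DE to GER). The element names, the `COUNTRY_CODES` table and the function name `xml_to_receiver_json` are assumptions made up for illustration; a real integration flow would use a converter and a mapping step instead.

```python
import json
import xml.etree.ElementTree as ET

# Hypothetical lookup table for the semantic mapping (sender code -> receiver code).
COUNTRY_CODES = {"DE": "GER", "FR": "FRA"}


def xml_to_receiver_json(xml_text: str) -> str:
    """Convert a sender XML address into the JSON shape the receiver expects."""
    root = ET.fromstring(xml_text)

    # findtext returns "" for an empty element and the default for a missing one.
    zip_text = root.findtext("ZipCode", default="")
    country = root.findtext("Country", default="")

    target = {
        # Structure: the field is renamed from ZipCode to ZIP.
        # Data type: an empty string becomes null in the target message.
        "ZIP": zip_text if zip_text else None,
        # Semantics: translate the country code, pass unknown codes through unchanged.
        "Country": COUNTRY_CODES.get(country, country),
    }
    # Data format: the target message is serialized as JSON instead of XML.
    return json.dumps(target)


sender_message = "<Address><ZipCode>69190</ZipCode><Country>DE</Country></Address>"
receiver_message = xml_to_receiver_json(sender_message)
```

Neither the sender nor the receiver has to know the other side's format or field names; only the mapping in the middle does.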

For the rest of this article, let's combine Data Format and Data Structure into Message Format (for simplification). SAP speaks of “aligned APIs” when sender and receiver speak the same language and no mediation through a middleware (which performs transformations, message mappings and even protocol switches) is required.

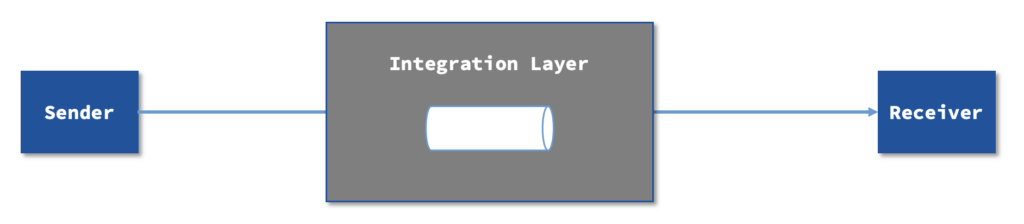

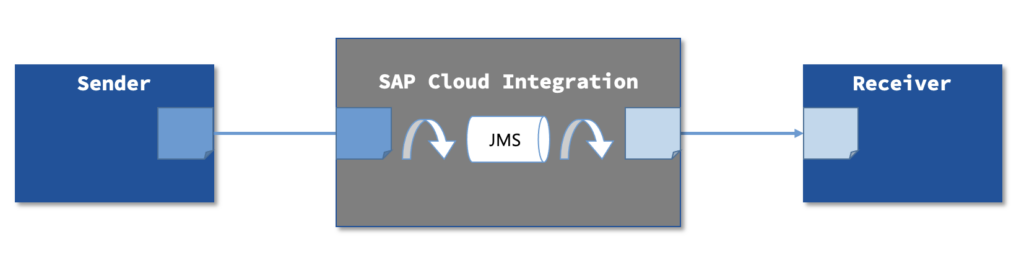

A broker decouples runtime dependencies through this pattern:

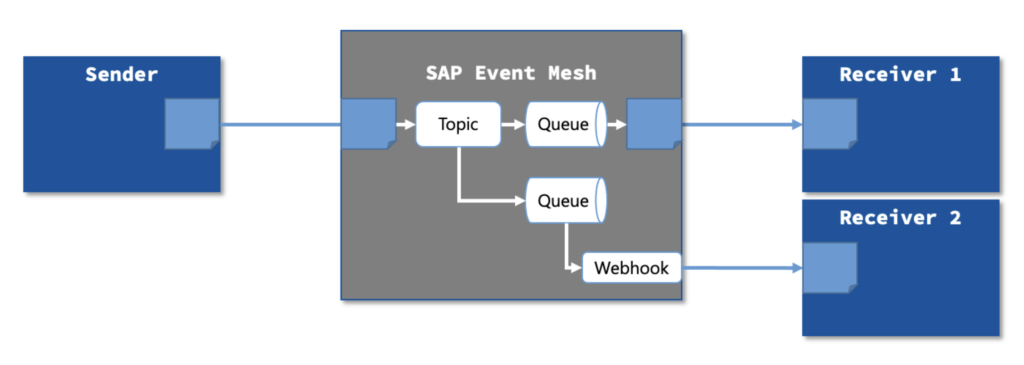
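The runtime decoupling behind this pattern can be sketched with a tiny in-memory queue in Python. This is only an illustration under simplifying assumptions (one process, no persistence, no network protocol); the class name `InMemoryQueue` and the message names are made up and are not the API of any of the brokers listed below.

```python
from collections import deque


class InMemoryQueue:
    """Stands in for a broker queue: it buffers messages until a receiver asks for them."""

    def __init__(self):
        self._messages = deque()

    def send(self, message):
        # The sender only talks to the queue; it never calls the receiver directly.
        self._messages.append(message)

    def receive(self):
        # Returns the oldest buffered message, or None if the queue is empty.
        return self._messages.popleft() if self._messages else None


broker_queue = InMemoryQueue()

# The receiver is "offline" here, yet the sender can still submit its messages.
broker_queue.send("order-1")
broker_queue.send("order-2")

# Later the receiver comes back and drains whatever has been buffered.
received = []
while (message := broker_queue.receive()) is not None:
    received.append(message)
```

The sender finishes its work as soon as the queue has accepted the message; the receiver's availability only affects when the message is consumed.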

There are many brokers available on the market. In our experience, we mainly see the ones listed below. They all serve this main purpose, but of course differ in their implementation approach. They typically handle (only) protocols like AMQP, MQTT or plain HTTP. Messages are exchanged through topics and/or queues, and some brokers work with Webhooks (to push messages to consumers).

- RabbitMQ

- Kafka (Confluent)

- Solace

- SAP Event Mesh, SAP Advanced Event Mesh (technology: Solace)

- Microsoft Azure Service Bus

- AWS SQS

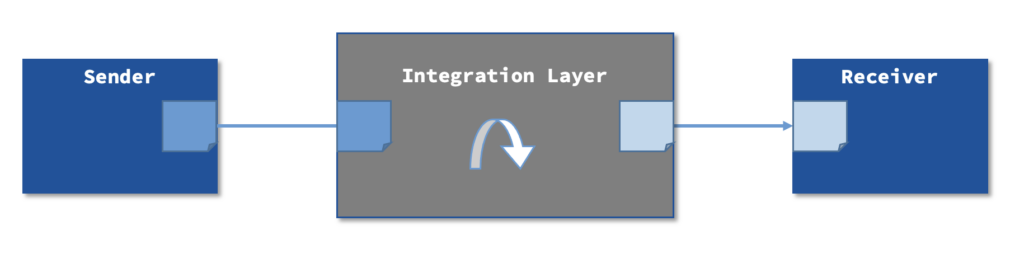

An ESB decouples Message Format dependencies through this pattern:

The main focus is to transform messages from one format to another. One can argue that this creates a lot of “mediated point-to-point” integrations (which is true), but what is the alternative? Mediation brings transparency and a clear approach to mapping the two different message formats. Solving this with a canonical data model, where all applications speak the same language, is nice in theory, but the reality is hard: each application has to map to this canonical data model with its own programming technique (which is rarely better). In the end, connecting the dots (aligned message formats) through API Management, or decoupled through a broker, results in a mediated point-to-point landscape as well (at least from a connectivity point of view)!

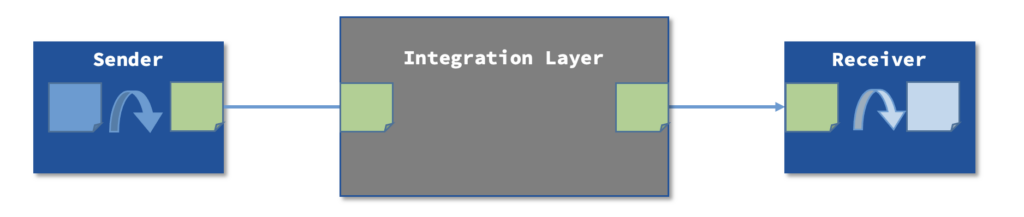

In fact, SAP Cloud Integration, as an ESB, can decouple in all of these areas.

- There are standard transformers and converters to support Data Format Decoupling (JSON <-> XML <-> CSV, Zip/Unzip, Decode/Encode) and Data Structure Decoupling (XSLT Mappings, Groovy/JavaScript Mappings, and (graphical) Message Mappings in a low-code fashion).

- What many people forget: SAP Cloud Integration can also decouple the runtime through queuing mechanisms like JMS or Data Store. For more information on queuing check out this article. The JMS-Queuing feature is technically based on SAP Event Mesh, which is a white-label product of Solace!

Do I still need a Broker?

SAP Event Mesh can connect directly to event consumers or producers through AMQP, MQTT, JMS or HTTP. One example is SAP S/4HANA, which by now offers a lot of events (see SAP API Business Hub), so there is a natural fit. But whether a broker is the right choice for asynchronous messaging depends on the use case.

PubSub

The typical integration pattern is PubSub (Publish & Subscribe). You can easily configure a connection between different event producers and event consumers through topics and queues (or Webhooks where needed). This makes sense if you dispatch the same event (message) to different receivers. However, please keep in mind that you can also send messages to multiple receivers from an ESB by configuring this in a low-code environment.
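As a rough illustration of the PubSub idea, the following Python sketch fans out one published event to every subscriber of a topic. It runs in memory only; the class `TopicBroker`, the topic name and the handlers are hypothetical and do not correspond to the API of SAP Event Mesh or any other broker.

```python
from collections import defaultdict


class TopicBroker:
    """Minimal in-memory topic broker: every subscriber of a topic gets every message."""

    def __init__(self):
        # topic name -> list of handler callables
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, handler):
        self._subscribers[topic].append(handler)

    def publish(self, topic, message):
        # The producer does not know who (or how many) the consumers are.
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(message)
        return len(handlers)


broker = TopicBroker()
billing_inbox, shipping_inbox = [], []

# Two independent consumers register for the same (hypothetical) topic.
broker.subscribe("sales/order/created", billing_inbox.append)
broker.subscribe("sales/order/created", shipping_inbox.append)

# One publish call reaches both consumers.
delivered = broker.publish("sales/order/created", "order-4711")
```

Adding a third receiver is a matter of one more subscription; the producer stays untouched.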

Replay

Another aspect is the replay capability, which is available with brokers like Kafka, Solace or SAP Advanced Event Mesh. You can go back, for example, two days and replay all messages that were processed through a particular topic or queue (typically for one receiver only).
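Conceptually, replay requires the broker to keep messages after delivery instead of deleting them. The sketch below shows the idea with a timestamped in-memory log in Python. The class `ReplayLog`, its methods and the fixed example dates are assumptions for illustration; real brokers implement this with their own retention and offset mechanisms.

```python
from datetime import datetime, timedelta


class ReplayLog:
    """Keeps every message with its timestamp so that a time range can be re-delivered."""

    def __init__(self):
        self._entries = []  # list of (timestamp, message), in arrival order

    def append(self, message, timestamp):
        self._entries.append((timestamp, message))

    def replay_since(self, since):
        # Return all retained messages from the given point in time onwards.
        return [message for timestamp, message in self._entries if timestamp >= since]


now = datetime(2024, 1, 10, 12, 0)  # fixed "current time" to keep the example deterministic
log = ReplayLog()
log.append("event-a", now - timedelta(days=3))
log.append("event-b", now - timedelta(days=1))
log.append("event-c", now - timedelta(hours=1))

# Go back two days and replay everything that was processed since then.
replayed = log.replay_since(now - timedelta(days=2))
```

In this example only `event-b` and `event-c` fall into the two-day window, so only these two would be sent to the receiver again.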

Additional Aspects

Brokers for Event-Driven Architectures serve the purpose of handling large volumes (but with a limited message size) in a scalable, low-latency way to decouple and ensure reliable messaging.

All of this can be achieved with SAP Cloud Integration, but with a higher price tag. SAP Cloud Integration is licensed by the number of messages (and their volume: 1 message = 256 KB, so 1 MB = 4 messages), whereas SAP Event Mesh is licensed by bandwidth in GB. The calculation (and comparison) can be done in the SAP Discovery Center.

When do you need a broker then, if you already have an ESB?
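The message metering mentioned above (1 message = 256 KB, so 1 MB = 4 messages) can be written down as a small Python helper. The rounding up to full 256 KB blocks, the minimum of one message and the use of 1 MB = 1024 KB are assumptions of this sketch; please check the SAP Discovery Center for the authoritative calculation.

```python
import math

KB_PER_METERED_MESSAGE = 256  # from the article: 1 message = 256 KB


def metered_messages(payload_kb):
    """Number of metered messages for one payload (assumption: round up, minimum 1)."""
    return max(1, math.ceil(payload_kb / KB_PER_METERED_MESSAGE))


one_megabyte = metered_messages(1024)   # 1 MB payload, assuming 1 MB = 1024 KB
small_event = metered_messages(10)      # a small 10 KB event still counts as one message
slightly_over = metered_messages(300)   # just above 256 KB counts as two messages
```

This shows why large payloads are expensive in a per-message model, while many small events are the typical case where a bandwidth-based broker license becomes attractive.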

- If your event producer/consumer speaks natively with a broker (e.g. via AMQP/MQTT)

- If you need a distribution model to multiple receivers and you want to implement a PubSub pattern

- If you need replay capabilities

- If you need to ensure FIFO (First-In-First-Out)/EOIO (Exactly-Once-In-Order) which is not (yet) available in SAP Cloud Integration

- For high-volume messaging (large volumes with high throughput, e.g. in IoT-scenarios or custom-developed mobile apps to process small messages from sensors or real-time analytics and eventing)
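To illustrate what an EOIO guarantee from the list above means for a receiver, here is a minimal Python sketch that drops duplicates and processes messages strictly by sequence number. It assumes that the sender attaches a gap-free sequence number starting at 1, which is an assumption of this example. The class `EoioReceiver` is hypothetical; a broker with FIFO/EOIO support gives you this behavior without custom code.

```python
class EoioReceiver:
    """Processes each sequence number exactly once and strictly in ascending order."""

    def __init__(self):
        self._next_seq = 1   # next sequence number that may be processed
        self._pending = {}   # messages that arrived too early, keyed by sequence number
        self.processed = []  # payloads in the order they were actually processed

    def on_message(self, seq, payload):
        # Exactly once: ignore anything already processed or already buffered.
        if seq < self._next_seq or seq in self._pending:
            return
        self._pending[seq] = payload
        # In order: release buffered messages only while there is no gap.
        while self._next_seq in self._pending:
            self.processed.append(self._pending.pop(self._next_seq))
            self._next_seq += 1


receiver = EoioReceiver()
# Messages arrive out of order and one of them is delivered twice.
for seq, payload in [(2, "b"), (1, "a"), (2, "b"), (3, "c")]:
    receiver.on_message(seq, payload)
```

After the loop, `receiver.processed` contains each payload once and in sequence order, even though the delivery was neither ordered nor duplicate-free.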

Conclusion

You can achieve all aspects of decoupling with an ESB/iPaaS like SAP Cloud Integration. You might consider brokers like SAP Event Mesh for transparency reasons, using PubSub when integrating multiple receivers, or when you need replay capabilities (SAP Advanced Event Mesh, Solace, Kafka). A broker is also a good fit when you have event producers like SAP S/4HANA that can connect to your broker natively via AMQP/MQTT.

Please keep in mind that there are scenarios where both components make sense (e.g. SAP Cloud Integration together with SAP Event Mesh (via AMQP-Adapter) or Kafka (via Kafka-Adapter)), so that you can use the best of both components together.