By default, SAP Cloud Integration (CPI) does not persist message payloads, i.e. there is no built-in queueing. If an error occurs (mapping, routing, connectivity), we have to design the desired behavior ourselves.

This document describes the available options. Adding error handling to each flow still makes sense if you need iFlow-specific processing or alerting, but that is not covered here. Note that monitoring with alert notifications can also be done outside of the iFlows using WHINT Interface Monitoring.

For synchronous connectivity, it is simple: errors are sent back to the calling (sending) system immediately. No persistence or message queueing is required, because the errors have to be handled by the sender, outside of our integration flows.

(REST/OData/SOAP) APIs are mainly integrated synchronously, whereas queue-based messaging is an asynchronous integration pattern. Asynchronous message queueing is a preferred way to decouple integrations between applications (and not only because "queueing" contains five vowels in a row)…

For asynchronous connectivity we have several options, depending on the sender adapter used:

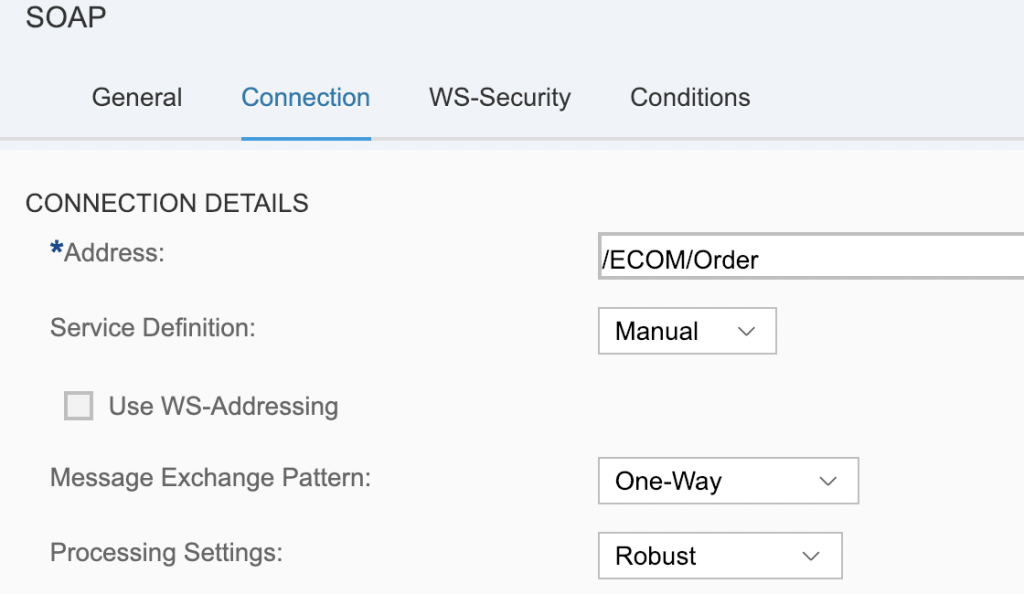

- SOAP (1.x)

  - You can set the processing setting to “Robust”. All steps of the iFlow are then executed synchronously, and the sender only receives an HTTP 2xx code (OK) once message processing has completed successfully. If an error occurs at any point, the sender is informed with an HTTP 500 code (server error) and must queue the message and organize the retries itself.

  - The alternative is the processing setting “WS standard”: as soon as the message has arrived completely in the iFlow, the sender is notified of success and CPI processing starts. If an error occurs and nothing has been done to enable queueing/persistence, the message is lost and no retry is possible. (Of course, the sender system may be able to manually resend a message that was apparently already processed successfully.)

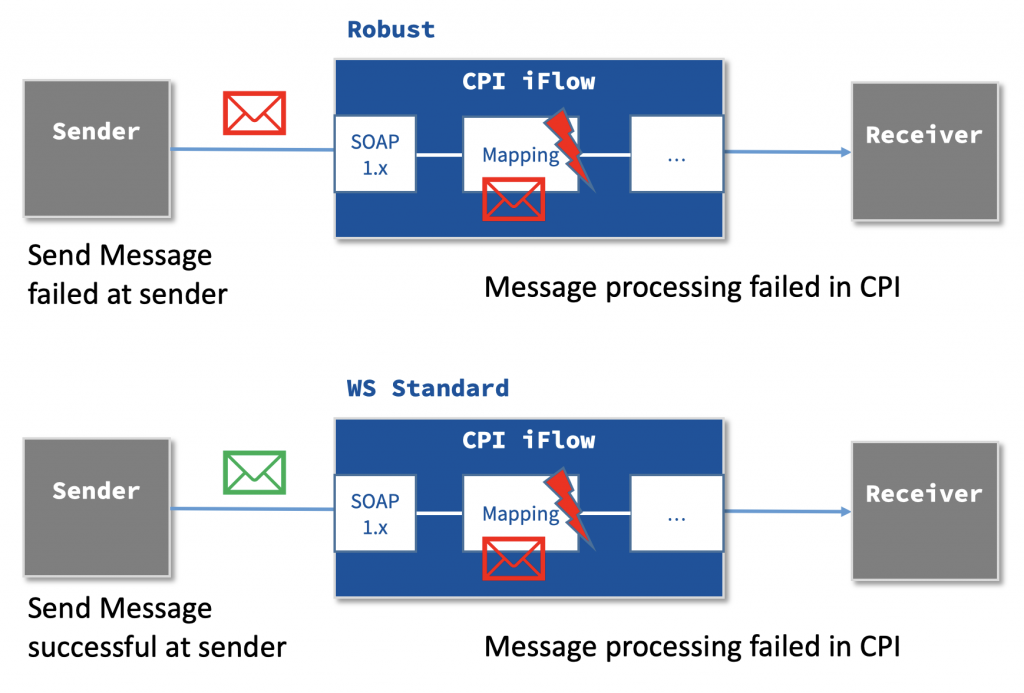
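To make the difference between the two SOAP processing settings concrete, here is a small Python simulation. It is not SAP code: `handle_robust`, `handle_ws_standard`, `sender_with_retry`, `make_flaky` and the exact status codes are hypothetical stand-ins chosen for illustration. It shows why a sender-side retry only helps in Robust mode: in WS standard mode the sender has already received a success code by the time the error occurs.

```python
# Hypothetical simulation of the two SOAP 1.x processing settings; not SAP code.
from typing import Callable, List


def handle_robust(process: Callable[[str], None], payload: str) -> int:
    """Robust: the iFlow runs completely before the sender gets a response."""
    try:
        process(payload)
        return 200  # success is reported only after processing completed
    except Exception:
        return 500  # the sender learns about the error and must retry itself


def handle_ws_standard(process: Callable[[str], None], payload: str,
                       lost: List[str]) -> int:
    """WS standard: the sender is acknowledged once the message has arrived."""
    status = 200  # acknowledged before processing starts
    try:
        process(payload)
    except Exception:
        lost.append(payload)  # nothing was persisted, so there is nothing to retry
    return status


def sender_with_retry(handler: Callable[[str], int], payload: str,
                      max_attempts: int = 3) -> int:
    """Sender-side retry loop; returns the number of attempts needed."""
    for attempt in range(1, max_attempts + 1):
        if handler(payload) < 300:
            return attempt
    raise RuntimeError(f"gave up after {max_attempts} attempts")


def make_flaky(failures: int) -> Callable[[str], None]:
    """A receiver that fails for the first `failures` calls, then recovers."""
    calls = {"n": 0}

    def process(payload: str) -> None:
        calls["n"] += 1
        if calls["n"] <= failures:
            raise ConnectionError("receiver not reachable")

    return process


robust_receiver = make_flaky(1)
attempts = sender_with_retry(lambda p: handle_robust(robust_receiver, p), "order-1")

lost: List[str] = []
ws_receiver = make_flaky(1)
ws_attempts = sender_with_retry(
    lambda p: handle_ws_standard(ws_receiver, p, lost), "order-2")
```

With one simulated receiver outage, the Robust sender succeeds on its second attempt, while the WS standard sender is satisfied after the first attempt and the message ends up in `lost`.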

- XI (ABAP Proxy) and AS2

  - Both adapters are triggered by an incoming message (event-based); however, when EO (Exactly Once) mode is selected, the message is temporarily persisted in a storage: a Data Store (JDBC-based) or a queue (JMS-based). This is the most convenient way of integration, as it gives us maximum flexibility:

    - The sender system has transferred the message successfully and the middleware is now in charge.

    - Messages in the Data Store or queue are reprocessed periodically.

    - If the error is permanent rather than temporary (a temporary error would be, e.g., a network issue), resolve the situation manually by deleting the message from the Data Store or queue.

    - Queues offer an even more convenient option, as JMS provides Dead-Letter Queues (DLQ): messages that repeatedly fail to be processed are moved automatically to a DLQ, where manual actions can be triggered (e.g. notifying a responsible person).


- For all other push-based sender adapters (the sender system triggers message processing), queueing must be implemented manually (see below):

  - IDoc, HTTP, SOAP (RM)

  - The ProcessDirect adapter is not listed here, as it is only used for communication within CPI; however, the same principles apply: no standard queueing is available.

- For all pull sender adapters (CPI triggers message processing at a periodic interval), queueing exists implicitly: the message remains at its source location until processing has completed successfully.

  - SFTP, Mail, SuccessFactors, Ariba

- Event-based sender adapters work in a push/pull approach: messages arriving in a queue/topic are processed immediately (event-based) via an event subscription.

  - AMQP, Kafka, RabbitMQ*, AliyunMNS**
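The implicit queueing of pull adapters boils down to one rule: remove the message from its source only after processing has succeeded. A minimal Python sketch, assuming an in-memory dict as a stand-in for an SFTP directory; `poll_once` and `process` are hypothetical names, not adapter settings.

```python
# Hypothetical in-memory model of a pull sender adapter (e.g. SFTP); not SAP code.
from typing import Callable, Dict, List


def poll_once(source: Dict[str, str],
              process: Callable[[str, str], None]) -> List[str]:
    """One poll cycle: a file is removed from the source only after success."""
    processed = []
    for name in sorted(source):  # sorted() copies the keys, so deleting is safe
        try:
            process(name, source[name])
        except Exception:
            continue  # the file stays in place and is picked up by the next poll
        del source[name]
        processed.append(name)
    return processed


def process(name: str, content: str) -> None:
    """Stand-in for mapping/routing; rejects incomplete XML."""
    if not content.endswith("/>"):
        raise ValueError(f"{name}: mapping error")


inbox = {"a.xml": "<ok/>", "b.xml": "<broken"}
first = poll_once(inbox, process)    # only a.xml succeeds; b.xml stays
inbox["b.xml"] = "<fixed/>"          # e.g. the file is corrected at the source
second = poll_once(inbox, process)   # b.xml is picked up again and succeeds
```

No separate retry store is needed: the source location itself acts as the queue.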

How to define Manual Queueing

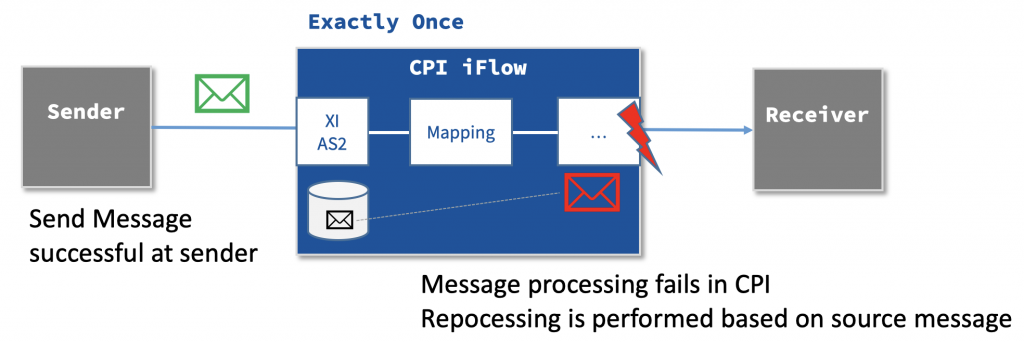

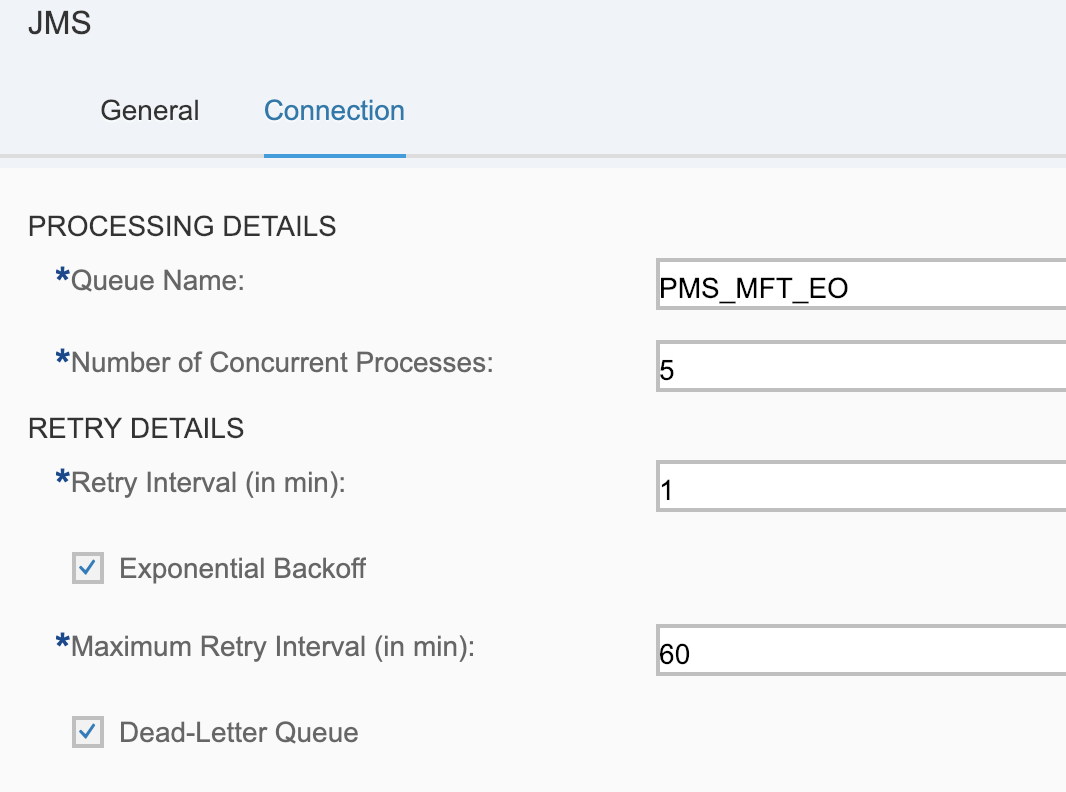

- JMS adapter: Queues

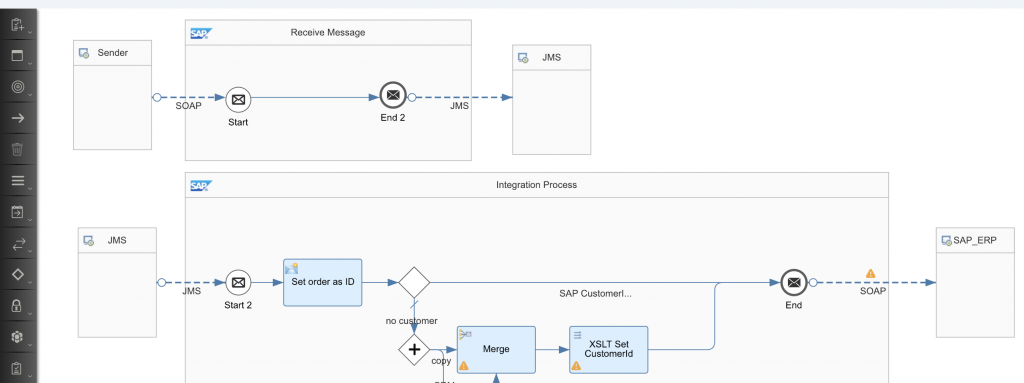

- The easiest way to ensure a proper queueing is using the JMS adapter (requires CPI Enterprise Edition or at least Enterprise Messaging)

- This is an internally used adapter, you can only send/receive messages though JMS queues within your CPI tenant locally.

- The approach is to persist all incoming messages into a queue first (with the unchanged messages) and then have a second Integration Process polling from the queue and processing the messages. Like this, the approach works like the XI or AS2 adapter, but with an additional Integration Process. Luckily this can be placed in the same iFlow. The messages remain in the queue until the processing of the message is successful.

- When other iFlows are called (via ProcessDirect adapter), you have to make sure that the transaction handling is using JMS, not JDBC.

- Great is the exponential backoff (poll-interval in doubled with each attempt) and Dead-Letter Queue handling

- The only disadvantage is the limited number of queues (28) available… Setting this up for each interface will soon use all available queues. However, you can add additional queues by increasing your subscription.

- Check out Mandy´s (SAP) blog on Async Messaging with JMS for more details
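The two-step JMS pattern, with its exponential backoff and dead-letter handling, can be modeled in a few lines of Python. This is a sketch under explicit assumptions, not the CPI implementation: `JmsModel`, `retry_interval_min`, `max_retry_interval_min` and `max_attempts` are hypothetical names; the cap on the interval and the rule "move to the DLQ after N failed attempts" are simplifications (CPI's actual dead-letter conditions may differ); and the model records the next delay instead of actually waiting.

```python
# Hypothetical model of the two-step JMS queueing pattern; names and parameters
# are illustrative assumptions, not CPI APIs or adapter settings.
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Deque, List


@dataclass
class Message:
    payload: str
    attempts: int = 0
    next_delay_min: float = 0.0  # recorded delay before the next retry


@dataclass
class JmsModel:
    retry_interval_min: float = 1.0       # assumed initial retry interval
    max_retry_interval_min: float = 60.0  # assumed upper bound for the backoff
    max_attempts: int = 5                 # assumed dead-letter threshold
    queue: Deque[Message] = field(default_factory=deque)
    dead_letter: List[Message] = field(default_factory=list)

    def receive(self, payload: str) -> None:
        """Integration Process 1: persist the unchanged message and return."""
        self.queue.append(Message(payload))

    def consume(self, process: Callable[[str], None]) -> None:
        """Integration Process 2: one poll cycle over the queued messages."""
        for _ in range(len(self.queue)):
            msg = self.queue.popleft()
            try:
                process(msg.payload)  # on success the message leaves the queue
            except Exception:
                msg.attempts += 1
                if msg.attempts >= self.max_attempts:
                    self.dead_letter.append(msg)  # needs manual action
                else:
                    # exponential backoff: the interval doubles with each attempt
                    msg.next_delay_min = min(
                        self.retry_interval_min * 2 ** (msg.attempts - 1),
                        self.max_retry_interval_min,
                    )
                    self.queue.append(msg)  # stays queued for a later retry


def process(payload: str) -> None:
    """Stand-in processing step with one permanently failing message."""
    if payload == "order-2":
        raise ValueError("permanent mapping error")


jms = JmsModel(max_attempts=3)
jms.receive("order-1")
jms.receive("order-2")

delays: List[float] = []
for _ in range(3):  # three poll cycles
    jms.consume(process)
    delays += [m.next_delay_min for m in jms.queue]
```

After three cycles, `order-1` is done, `order-2` has been retried with delays of 1 and 2 minutes and then moved to the dead-letter list, where a person can decide what to do with it.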

- Data Store (JDBC)

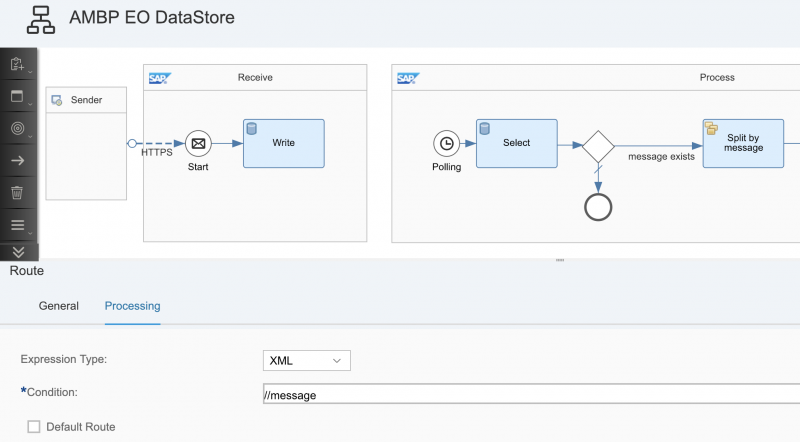

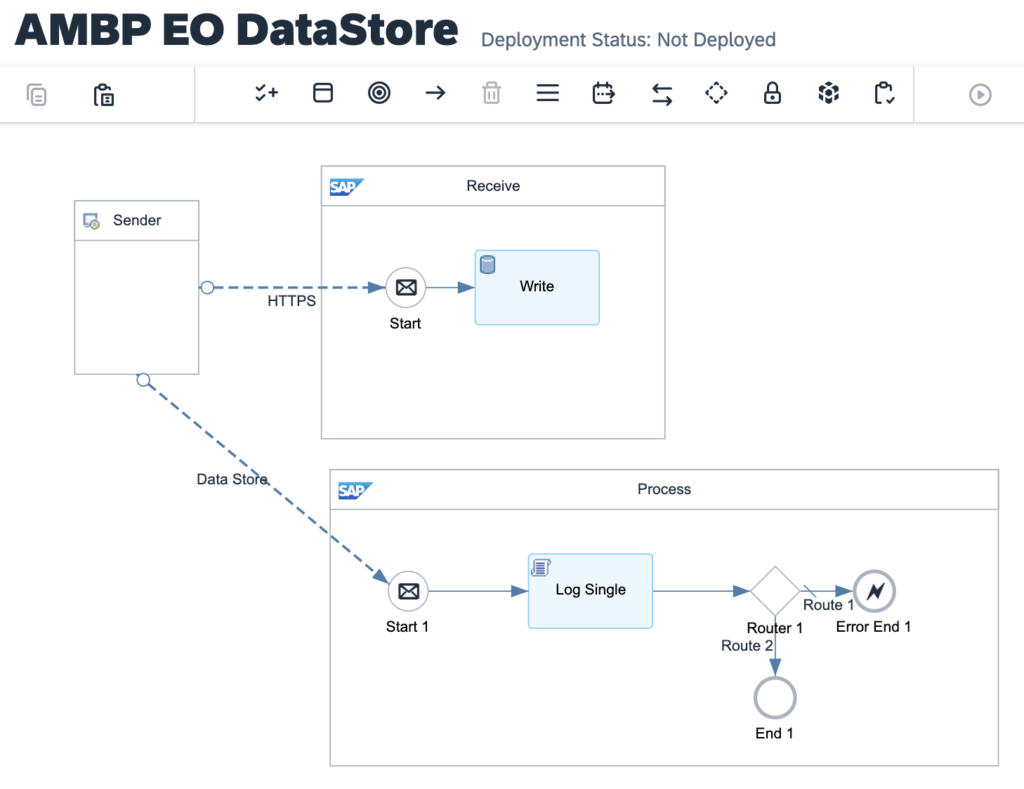

- Using the Data Store we can also achieve queuing without the limitations above (we can have many, many Data Stores).

- The approach is similar to the JMS one, but we have to manage the processing more manually, see the example below.

- If the first message in the queue fails, all following messages remain in the queue untouched. Therefore you should design the Select in batch mode (to parallelize processing) and then iterate by message with a splitter

- Limitations

- Unlike the JMS approach, you will see a message for each poll interval, even if no entry is inside the Data Store. We have to use the gateway toi check if a message exists or not

- The Select Operation only supports XML. In case you want to transfer Non-XML (binary, text, ..), you have to Base64-encode the payload and add an XML tag around with a Content Modifier Step.

- The Select Operation reads the set of messages randomly (e.g. 100 messages), not using a FIFO approach. Like this, blocking messages can stop the interface entirely, even if some messages could be processed successfully.

- Metadata (e.g. source file name) would have to be stored in the entry id as the data store only persists the body.
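The Data Store variant needs more hand-made logic: select a batch, check whether anything was returned (the gateway), split into single entries, delete each entry only after it was processed, and Base64-wrap non-XML payloads. A rough Python sketch, assuming a dict as a stand-in for the Data Store, with the entry ID carrying metadata such as the file name; `wrap_non_xml`, `unwrap`, `poll_data_store` and the `<payload>` tag are hypothetical and not part of any CPI API.

```python
# Hypothetical model of Data Store based queueing; not the CPI Data Store API.
import base64
from typing import Callable, Dict, List, Tuple


def wrap_non_xml(raw: bytes, tag: str = "payload") -> str:
    """Select only returns XML, so binary/text is Base64-encoded inside a tag."""
    return f"<{tag}>{base64.b64encode(raw).decode('ascii')}</{tag}>"


def unwrap(xml: str, tag: str = "payload") -> bytes:
    """Reverse of wrap_non_xml: strip <tag>...</tag> and decode the Base64 body."""
    inner = xml[len(tag) + 2: -(len(tag) + 3)]
    return base64.b64decode(inner)


def poll_data_store(store: Dict[str, str], process: Callable[[str, str], None],
                    batch_size: int = 100) -> Tuple[List[str], List[str]]:
    """One poll cycle: select a batch, check for entries, split, delete on success."""
    # This model takes insertion order; the real Select does not guarantee FIFO.
    batch = list(store.items())[:batch_size]
    if not batch:
        return [], []  # gateway check: nothing to process in this cycle
    done, failed = [], []
    for entry_id, body in batch:  # splitter: one message per entry
        try:
            process(entry_id, body)
        except Exception:
            failed.append(entry_id)  # entry stays in the store for the next poll
            continue
        del store[entry_id]  # delete only after successful processing
        done.append(entry_id)
    return done, failed


def process(entry_id: str, body: str) -> None:
    """Stand-in processing step: expects a PDF wrapped as Base64 XML."""
    if not unwrap(body).startswith(b"%PDF"):
        raise ValueError(f"{entry_id}: unexpected content")


store = {
    "invoice_001.pdf": wrap_non_xml(b"%PDF-1.4 minimal"),
    "invoice_002.pdf": wrap_non_xml(b"not a pdf"),
}
done, failed = poll_data_store(store, process)
empty_cycle = poll_data_store({}, process)
```

Because each entry is processed in its own `try` block, one failing entry does not block the others in the same batch; it simply stays in the store for the next poll.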

Meanwhile, you can also use the Data Store sender adapter, which removes many of these downsides, but we still recommend JMS, as you can easily RETRY queued messages at your convenience (see podcast episode).

Summary

Each interface is unique and has its own requirements. SAP Cloud Integration provides great flexibility here, and ideally we work with integration patterns that help us solve requirements in a standardized way. However, for asynchronous integration with queueing, there is no perfect pattern available yet, unless you can use the XI or AS2 sender adapter. Good alternatives are the SOAP 1.x adapter in Robust mode, which pushes errors back to the sender, and the polling adapters, where the message remains at its source until processing is complete. The two-step approach with JMS queues also provides an excellent solution, but the number of queues is still limited. Until SAP removes this limitation, we can use the Data Store…

| Type | Adapter | Queueing |
|---|---|---|
| Push | SOAP 1.x | Robust mode possible: errors are pushed back to the sender (messages remain at the source in case of errors) |
| Push | IDoc, HTTP, SOAP (RM) | Queueing must be implemented manually |
| Push | XI, AS2, AS4 | Queueing available (with Data Store/JMS) |
| Pull | SFTP, Mail, SuccessFactors, Ariba, FTP | Messages remain at the source location in case of errors |
| Event-based pull from queue/topic | AMQP, Kafka, RabbitMQ*, AliyunMNS** | Messages remain at the source location in case of errors or are moved to a Dead-Letter Queue |

\* RabbitMQ = WHINT RabbitMQ Adapter, \*\* AliyunMNS = WHINT AlibabaCloud MNS Adapter